Dr. Melissa Hogan

March 19, 2026

Most EdTech impact looks strongest right before it fades.

Early pilots show promise. First-year dashboards trend upward. Case studies highlight encouraging gains. And then, inevitably, results flatten, variability increases, and the story becomes harder to tell.

This isn’t failure. It’s impact decay.

And until the industry is willing to name it, districts will continue mistaking temporary effects for lasting change, often right as renewal decisions are being made.

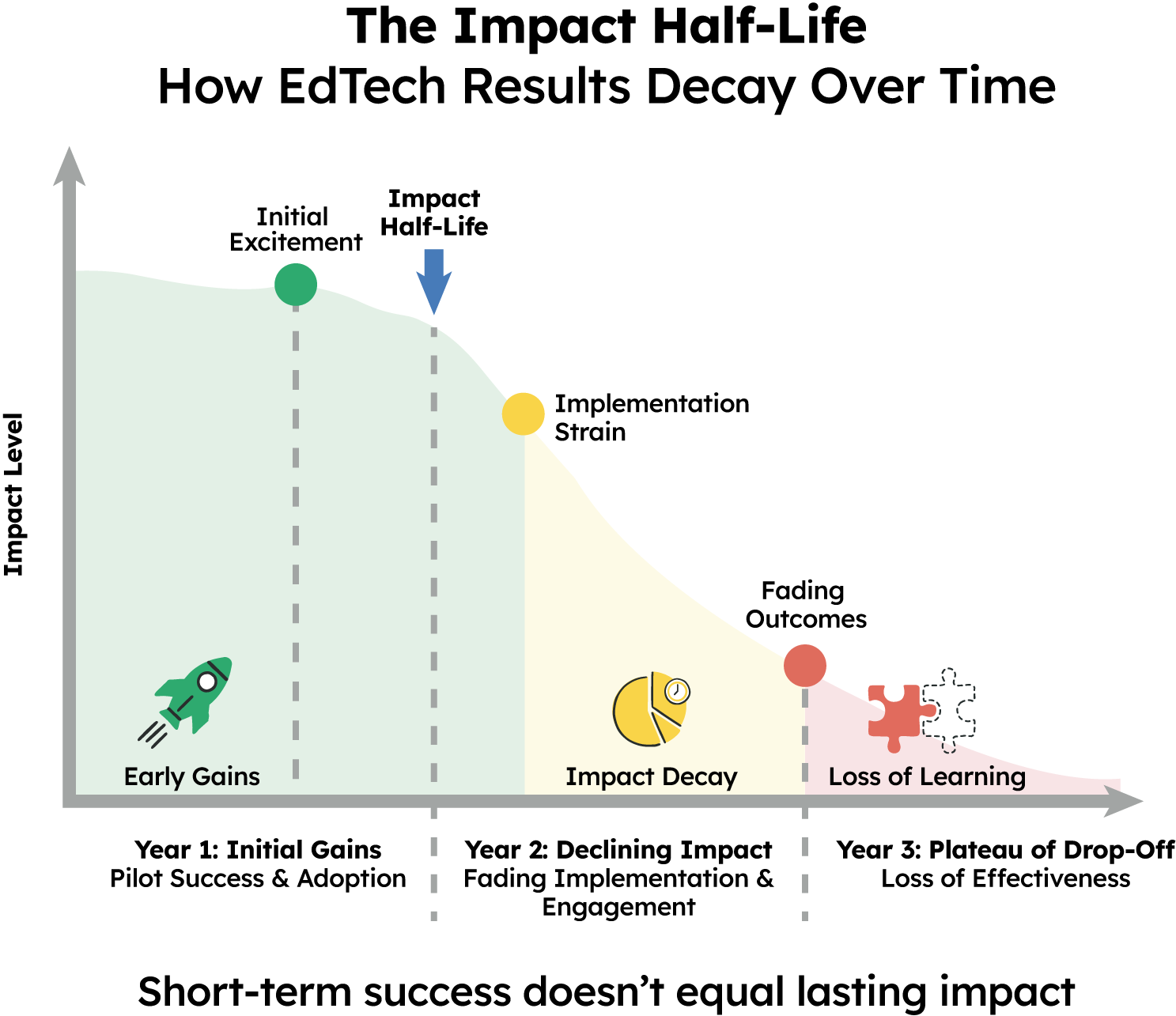

In science, a half-life is the time it takes for a quantity to fall to half its starting value. Impact in education can be read the same way.

Impact Half-Life refers to the length of time learning gains persist once novelty wears off, implementation scales, and instructional routines are tested by real-world constraints.

If gains disappear by year two, the impact didn’t endure. It expired.
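To make the analogy concrete, here is a minimal sketch of how a half-life could be estimated from yearly results. It assumes gains shrink roughly exponentially over time, which is a simplifying assumption borrowed from the science analogy and not a method described in this series. The function name `estimate_half_life` and the sample numbers are hypothetical, chosen only for illustration.

```python
import math


def estimate_half_life(years, gains):
    """Estimate how many years it takes for a gain to fall by half.

    Assumes gains follow g(t) = g0 * exp(-k * t) and fits k with an
    ordinary least-squares line through (t, log(gain)).
    Returns math.inf when the fitted trend shows no decay.
    """
    if len(years) != len(gains) or len(years) < 2:
        raise ValueError("need at least two (year, gain) pairs")
    if any(g <= 0 for g in gains):
        raise ValueError("gains must be positive to take a logarithm")

    logs = [math.log(g) for g in gains]
    n = len(years)
    mean_t = sum(years) / n
    mean_y = sum(logs) / n

    sxx = sum((t - mean_t) ** 2 for t in years)
    if sxx == 0:
        raise ValueError("years must not all be identical")
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(years, logs))

    slope = sxy / sxx  # equals -k under the exponential assumption
    if slope >= 0:
        return math.inf  # gains are flat or growing: no decay observed
    return math.log(2) / -slope


# Hypothetical effect sizes for years 0 through 3 of an implementation.
half_life = estimate_half_life([0, 1, 2, 3], [0.40, 0.20, 0.10, 0.05])
print(f"Estimated impact half-life: {half_life:.2f} years")
```

In this made-up series the gain halves every year, so the estimate comes out at about one year. Real district data will be noisier and may not follow a clean curve, so a figure like this is a conversation starter, not a verdict.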

EdTech results frequently peak during:

These conditions matter, but they are rarely permanent.

As tools scale across more classrooms:

Without systems explicitly designed to sustain impact, early gains erode, not because the idea was flawed, but because durability was never measured or engineered.

Renewals often rely on the same evidence used to justify adoption, sometimes years later.

But evidence has a shelf life.

When districts renew based on:

They assume impact is still present, even though implementation conditions have changed.

This creates a dangerous lag between reality and decision-making. By the time decline becomes visible, districts are already invested and students have already paid the cost.

True instructional impact should:

Durability isn’t an add-on metric. It’s the difference between a promising intervention and a dependable one.

If impact can’t survive scale, it can’t justify renewal.

Assessing Impact Half-Life requires a different orientation toward evidence.

It means asking:

These questions are harder than reporting a single outcome. But they are the questions districts must answer to protect instructional coherence and long-term student growth.

When impact decay goes unnoticed:

The problem isn’t that EdTech doesn’t work. It’s that working once is not the same as working sustainably.

The future of EdTech impact will not be defined by who shows the biggest early gains.

It will be defined by who can demonstrate:

Because impact that expires before renewal was never impact. It was momentum.

Evaluate whether impact persists, not just whether it appeared.

Ask vendors to show multi-year or cohort-over-cohort outcomes and to explain what systems support sustained gains once early implementation support fades.
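One simple way to read multi-year outcomes from a vendor is to express each later year's gain as a share of the first year's gain. The sketch below illustrates that idea only. The helper names `gain_retention` and `persists`, the 50% threshold, and the sample figures are all assumptions made for this example, not standards from this series.

```python
def gain_retention(gains_by_year):
    """Return each year's gain as a fraction of the first year's gain."""
    if not gains_by_year:
        raise ValueError("need at least one year of results")
    first = gains_by_year[0]
    if first <= 0:
        raise ValueError("first-year gain must be positive")
    return [g / first for g in gains_by_year]


def persists(gains_by_year, min_retention=0.5):
    """True if the latest year keeps at least `min_retention` of the
    first-year gain. The 0.5 default is an illustrative assumption."""
    return gain_retention(gains_by_year)[-1] >= min_retention


# Hypothetical yearly gains for two products (year 1, year 2, year 3).
fading = [0.40, 0.30, 0.10]
steady = [0.40, 0.38, 0.36]

for name, gains in (("fading", fading), ("steady", steady)):
    shares = ", ".join(f"{r:.0%}" for r in gain_retention(gains))
    print(f"{name}: retention by year = {shares}; persists = {persists(gains)}")
```

The same comparison can be run cohort over cohort by passing the first-year gain of each successive cohort in place of the yearly gains for one cohort.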

At this point, the pattern is clear. The problem isn’t a lack of data; it’s how impact is defined, measured, and communicated. The final post brings our series together, outlining what a higher standard for impact looks like and what districts should demand next.

For definitions and key terms used throughout this series, see the Impact Reality Series Glossary of Terms.